Some children have already died and only a minority who inherit the mutation will escape cancer in their lifetimes.

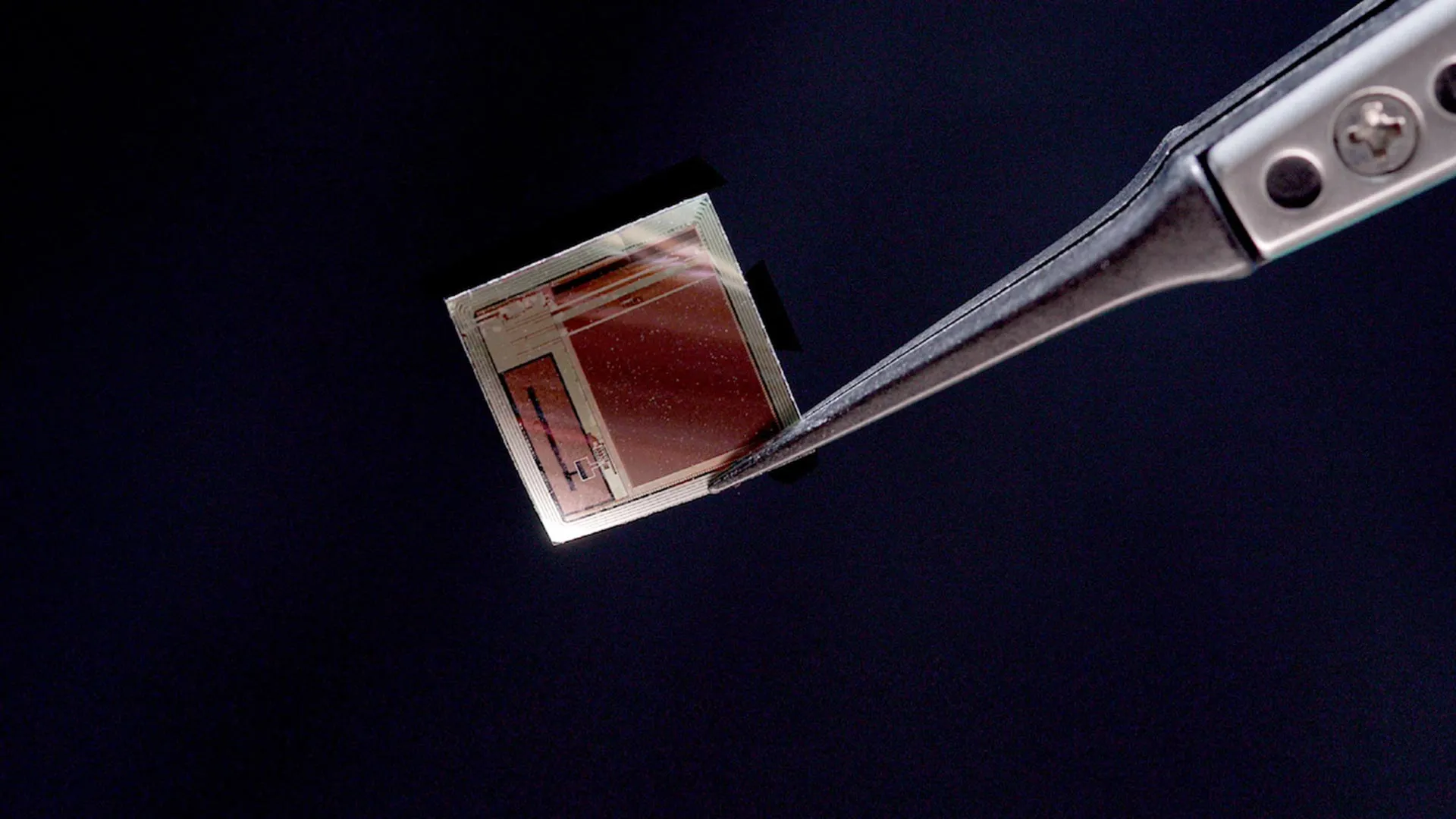

Scientists reveal a tiny brain chip that streams thoughts in real time

A new brain implant could significantly reshape how people interact with computers while offering new treatment possibilities for conditions such as epilepsy, spinal cord injury, ALS, stroke, and blindness. By creating a minimally invasive, high-throughput communication path to the brain, it has the potential to support seizure control and help restore motor, speech, and visual abilities.

The promise of this technology comes from its extremely small size paired with its ability to transmit data at very high speeds. Developed through a collaboration between Columbia University, NewYork-Presbyterian Hospital, Stanford University, and the University of Pennsylvania, the device is a brain-computer interface (BCI) built around a single silicon chip. This chip forms a wireless, high-bandwidth link between the brain and external computers. The system is known as the Biological Interface System to Cortex (BISC).

A study published Dec. 8 in Nature Electronics outlines BISC’s architecture, which includes the chip-based implant, a wearable “relay station,” and the software needed to run the platform. “Most implantable systems are built around a canister of electronics that occupies enormous volumes of space inside the body,” says Ken Shepard, Lau Family Professor of Electrical Engineering, professor of biomedical engineering, and professor of neurological sciences at Columbia University, who served as one of the senior authors and led the engineering work. “Our implant is a single integrated circuit chip that is so thin that it can slide into the space between the brain and the skull, resting on the brain like a piece of wet tissue paper.”

Transforming the Cortex Into a High-Bandwidth Interface

Shepard worked closely with senior and co-corresponding author Andreas S. Tolias, PhD, professor at the Byers Eye Institute at Stanford University and co-founding director of the Enigma Project. Tolias’s extensive experience training AI systems on large-scale neural recordings, including those collected with BISC, helped the team analyze how well the implant could decode brain activity. “BISC turns the cortical surface into an effective portal, delivering high-bandwidth, minimally invasive read-write communication with AI and external devices,” Tolias says. “Its single-chip scalability paves the way for adaptive neuroprosthetics and brain-AI interfaces to treat many neuropsychiatric disorders, such as epilepsy.”

Dr. Brett Youngerman, assistant professor of neurological surgery at Columbia University and neurosurgeon at NewYork-Presbyterian/Columbia University Irving Medical Center, served as the project’s main clinical collaborator. “This high-resolution, high-data-throughput device has the potential to revolutionize the management of neurological conditions from epilepsy to paralysis,” he says. Youngerman, Shepard, and NewYork-Presbyterian/Columbia epilepsy neurologist Dr. Catherine Schevon recently secured a National Institutes of Health grant to use BISC in treating drug-resistant epilepsy. “The key to effective brain-computer interface devices is to maximize the information flow to and from the brain, while making the device as minimally invasive in its surgical implantation as possible. BISC surpasses previous technology on both fronts,” Youngerman adds.

“Semiconductor technology has made this possible, allowing the computing power of room-sized computers to now fit in your pocket,” Shepard says. “We are now doing the same for medical implantables, allowing complex electronics to exist in the body while taking up almost no space.”

Next-Generation BCI Engineering

BCIs function by connecting with the electrical signals used by neurons to communicate. Current medical-grade BCIs typically rely on multiple separate microelectronic components, such as amplifiers, data converters, and radio transmitters. These parts must be stored in a relatively large implanted canister, placed either by removing part of the skull or in another part of the body like the chest, with wires extending to the brain.

BISC is built differently. The entire system resides on a single complementary metal-oxide-semiconductor (CMOS) integrated circuit that has been thinned to 50 μm and occupies less than 1/1000th the volume of a standard implant. With a total volume of about 3 mm³, the flexible chip can curve to match the brain’s surface. This micro-electrocorticography (µECoG) device contains 65,536 electrodes, 1,024 recording channels, and 16,384 stimulation channels. Because the chip is produced using semiconductor industry manufacturing methods, it is suitable for large-scale production.

The chip integrates a radio transceiver, a wireless power circuit, digital control electronics, power management, data converters, and the analog components necessary for both recording and stimulation. The external relay station provides power and data communication through a custom ultrawideband radio link that reaches 100 Mbps, a throughput at least 100 times higher than any other wireless BCI currently available. Operating as an 802.11 WiFi device, the relay station effectively bridges any computer to the implant.
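For a rough sense of scale, the 100 Mbps link can be divided across the 1,024 recording channels. The sketch below is only back-of-envelope arithmetic: the even split across channels, the 10-bit sample width, and the absence of any protocol overhead are assumptions made for illustration, not figures from the study.

```python
# Back-of-envelope link budget for a high-channel-count wireless implant.
# LINK_RATE_BPS and RECORDING_CHANNELS come from the article; the sample
# width below is a hypothetical value chosen only for illustration.

LINK_RATE_BPS = 100_000_000      # 100 Mbps radio link (from the article)
RECORDING_CHANNELS = 1_024       # simultaneous recording channels (from the article)
ASSUMED_BITS_PER_SAMPLE = 10     # assumption: not stated in the article


def per_channel_bps(link_rate_bps: float, channels: int) -> float:
    """Bits per second available to each channel if the link is split evenly."""
    return link_rate_bps / channels


def max_sample_rate_hz(link_rate_bps: float, channels: int, bits_per_sample: int) -> float:
    """Upper bound on per-channel sample rate, ignoring all protocol overhead."""
    return per_channel_bps(link_rate_bps, channels) / bits_per_sample


if __name__ == "__main__":
    budget = per_channel_bps(LINK_RATE_BPS, RECORDING_CHANNELS)
    rate = max_sample_rate_hz(LINK_RATE_BPS, RECORDING_CHANNELS, ASSUMED_BITS_PER_SAMPLE)
    print(f"Per-channel budget: {budget:.2f} bit/s")
    print(f"Max sample rate at {ASSUMED_BITS_PER_SAMPLE} bits/sample: {rate:.1f} Hz")
```

Under these assumptions each channel gets just under 100 kbit/s. A real link would lose some of that to framing and error handling.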

BISC incorporates its own instruction set along with a comprehensive software environment, forming a specialized computing system for brain interfaces. The high-bandwidth recording demonstrated in this study allows brain signals to be processed by advanced machine-learning and deep-learning algorithms, which can interpret complex intentions, perceptual experiences, and brain states.

“By integrating everything on one piece of silicon, we’ve shown how brain interfaces can become smaller, safer, and dramatically more powerful,” Shepard says.

Advanced Semiconductor Fabrication

The BISC implant was fabricated using TSMC’s 0.13-μm Bipolar-CMOS-DMOS (BCD) technology. This fabrication method combines three semiconductor technologies into one chip to produce mixed-signal integrated circuits (ICs). It allows digital logic (from CMOS), high-current and high-voltage analog functions (from bipolar and DMOS transistors), and power devices (from DMOS) to work together efficiently, all of which are essential for BISC’s performance.

Moving From the Lab Toward Clinical Use

To transition the system into real-world medical use, Shepard’s group partnered with Youngerman at NewYork-Presbyterian/Columbia University Irving Medical Center. They developed surgical procedures to place the thin implant safely in a preclinical model and confirmed that the device produced high-quality, stable recordings. Short-term intraoperative studies in human patients are already underway.

“These initial studies give us invaluable data about how the device performs in a real surgical setting,” Youngerman says. “The implants can be inserted through a minimally invasive incision in the skull and slid directly onto the surface of the brain in the subdural space. The paper-thin form factor and lack of brain-penetrating electrodes or wires tethering the implant to the skull minimize tissue reactivity and signal degradation over time.”

Extensive preclinical work in the motor and visual cortices was carried out with Dr. Tolias and Bijan Pesaran, professor of neurosurgery at the University of Pennsylvania, both recognized leaders in computational and systems neuroscience.

“The extreme miniaturization by BISC is very exciting as a platform for new generations of implantable technologies that also interface with the brain with other modalities such as light and sound,” Pesaran says.

BISC was developed through the Neural Engineering System Design program of the Defense Advanced Research Projects Agency (DARPA) and draws on Columbia’s deep expertise in microelectronics, the advanced neuroscience programs at Stanford and Penn, and the surgical capabilities of NewYork-Presbyterian/Columbia University Irving Medical Center.

Commercial Development and Future AI Integration

To move the technology closer to practical use, researchers at Columbia and Stanford created Kampto Neurotech, a startup founded by Columbia electrical engineering alumnus Dr. Nanyu Zeng, one of the project’s lead engineers. The company is producing research-ready versions of the chip and working to secure funding to prepare the system for use in human patients.

“This is a fundamentally different way of building BCI devices,” Zeng says. “In this way, BISC has technological capabilities that exceed those of competing devices by many orders of magnitude.”

As artificial intelligence continues to advance, BCIs are gaining momentum both for restoring lost abilities in people with neurological disorders and for potential future applications that enhance normal function through direct brain-to-computer communication.

“By combining ultra-high resolution neural recording with fully wireless operation, and pairing that with advanced decoding and stimulation algorithms, we are moving toward a future where the brain and AI systems can interact seamlessly — not just for research, but for human benefit,” Shepard says. “This could change how we treat brain disorders, how we interface with machines, and ultimately how humans engage with AI.”

The Nighttime Routine Scientists, Dentists, And Longevity Experts Swear By

Longevity expert after longevity expert has said that the steps to a longer life are somewhat familiar, even boring: a good diet, enough sleep, and adequate physical activity are key.

But exciting research is happening within those areas, which is why some scientists have advised on everything from when you eat your dinner to the best bedtime for better ageing.

Here, we’ll share some studies that might help make your nighttime routine as conducive as possible to the best, and even most longevity-boosting, results:

Speaking to GQ, Valter Longo, director of the Longevity Institute at the University of Southern California, said that the longest-living people he’s tracked stopped eating 12 hours before breakfast the following day.

That may be, he said, because digesting food may interrupt your sleep and could mean food is stored in a different way.

So, if you’re an eight-hour sleeper, that could mean you stop eating four hours before you sleep and have breakfast right away.

Or you could stop eating three hours before sleep and wait an hour after waking to have brekkie.

Gum disease has been linked to a range of health issues, from heart conditions to tooth loss, irritable bowel syndrome (IBS), and even depression.

We don’t know exactly whether worse gum health comes from people having preexisting health conditions, which can make looking after your teeth harder, or whether gum problems actually cause those conditions to begin with.

But speaking to HuffPost UK, Dr Jenna Chimon, a cosmetic dentist at Long Island Veneers, explained that gums are “living tissue connected directly to your bloodstream… bacteria and the toxins they release create a constant state of inflammation”.

Low-grade chronic inflammation has been linked to faster ageing and worse health outcomes.

So while, again, we still don’t know exactly in which direction the gum health/all-body health connection flows, experts recommend flossing anyway; worst-case scenario, you’ll have happier gums.

A 2024 paper listed sleep regularity as a “stronger predictor of mortality” than even sleep duration.

That means that when you go to bed might be more important than how long you sleep when it comes to your risk of death, though having either way too much or way too little sleep is also linked to an increased risk of premature death in the same paper.

Speaking to HuffPost UK previously, registered dietician and longevity specialist Melanie Murphy Richter, who studied under longevity researcher Dr Valter Longo at the University of Southern California, said, “Sleep is one of the most powerful longevity tools we have, and timing matters.

“Going to bed between 10pm and midnight and waking with the sun supports circadian rhythms, hormone balance, and cellular repair – all critical for healthy ageing,” she added.

It is true that some of us have a later chronotype, or a natural “night owl” body clock.

But a 2024 study by Stanford researchers suggested that no matter your natural preference, sleeping after 1am was linked to worse ageing outcomes.

“To age healthily, individuals should start sleeping before 1am, despite chronobiological preferences,” they wrote.

‘Lammy Must Ditch Plan To Scrap Jury Trials Or Face Embarrassing Defeat’, Warns Senior Labour MP

David Lammy’s forced announcement last week after the apparent accidental leak of his plans to scrap some jury trials came as a shock to Labour backbenchers.

There was reference in our election manifesto to addressing the Crown Court backlog which grew exponentially under the previous government, but there was never any suggestion that we, the Labour Party, would ever consider doing away with the rights of those accused of serious crimes to be tried by a jury of 12 good men, women and true.

Not least when current justice ministers, including the Lord Chancellor, have made very public statements pleading the case for juries in criminal proceedings to be maintained. After all, juries have existed in the English (and Welsh) legal system for over 800 years.

Threatening to restrict jury trials is both a dereliction of duty and an ineffective way of dealing with a crippling backlog of cases. The erosion of jury trials not only risks undermining a fundamental right, but importantly, will not reduce the backlog by anything like enough to speed up justice for victims and those that are accused and prosecuted by the Crown.

If this ever comes to the House of Commons, I will rebel and vote against it, and I think the government would be defeated on this issue. The House and the public will not stand for the erosion of a fundamental right, particularly given that there are more effective ways to reduce the backlog.

Sir Brian Leveson is a well-respected figure whose words carry much weight but even Sir Brian is not wedded to this idea. But the outcry from stakeholders in the criminal justice system must not be ignored.

Our system rightly prevents the judiciary from speaking out on such matters, but when you have the Bar Council and the Criminal Bar Association united in their opposition to these destructive plans, then it is easy to work out what judges and recently-retired judges are saying to lawyers when they are speaking privately.

These warnings need to be heard and acted upon before it is too late. Let’s be honest now, the problem (which is massive) was not caused by juries and it will not be solved by their removal. If this is not ditched, then the government risks another embarrassing defeat.

Labour MPs deserve better from the prime minister than to be marched up the proverbial hill, marched back down again, and then made to pretend that we were never asked to do the unthinkable in the first place. Parliamentarians from across the political divide recognise the constitutional importance of trial by jury and the danger of its erosion from public life.

Backbench MPs see this as a step too far. No responsible parliament can allow a cornerstone of justice and our democracy to be savagely attacked on the basis that the government is actually doing something to fix the problem, when in fact anybody who is anybody, whether practitioners, academics or the judiciary itself, knows full well that these plans will not do what they say on the tin and will most definitely not protect and promote the interests of victims of crime.

The Lord Chancellor would be better promising less and doing more. There is much to do. The government chief whip is a good and well-respected MP, but he isn’t Paul Daniels – the chief whip’s best magic trickery cannot magic the inevitable rebellion away.

One of the primary causes of the backlog is the restriction on ‘sitting days’, the number of days Crown Courts operate a year. Around 130,000 sitting days are available to the courts, but, despite a capacity crisis, sitting days are restricted by around 20,000 a year.

While the government has rightly announced that it is increasing the number of sitting days by 5,000, this still leaves a substantial shortfall. This inexplicable misuse of court time needs to be rectified.

The parliamentary timetable for these wrongheaded proposals is most likely to be the second half of next year, perhaps October or November. If the emergency is now, then why isn’t the justice secretary arguing for time on the floor of the House now?

Why, if it is so very urgent and just about reducing the backlog, won’t David Lammy put in a sunset clause on the face of the bill so that this policy can be scrapped once the backlog is down to a manageable number? At which time he himself would revert to saying that “criminal trials without juries are a bad idea, you don’t fix the backlog with trials that are widely perceived as unfair”.

And why not start using the promised £550 million for victims’ support services immediately? The government doesn’t need primary legislation for that. There isn’t a backlog in every court centre. Certain courts have managed to reduce any backlog to manageable numbers through proactive case management. Let’s look at that model before we throw out the baby with the bath water. The government should reconsider this now, before lasting damage is done to public confidence in our courts, the justice system and this government.

Karl Turner is the Labour MP for Kingston-upon-Hull East

Mutated H3N2 flu virus is circulating – so should you buy a vaccine this year?

Flu has come early and experts predict it could be a particularly nasty season.

Some schools disrupted amid rise in flu cases

Flu is on the rise, but ministers say schools should only close in extreme circumstances.

Much of £11bn Covid scheme fraud ‘beyond recovery’, report says

The response to the pandemic led to “enormous outlays of public money which exposed it to the risk of fraud and error”, a report says.

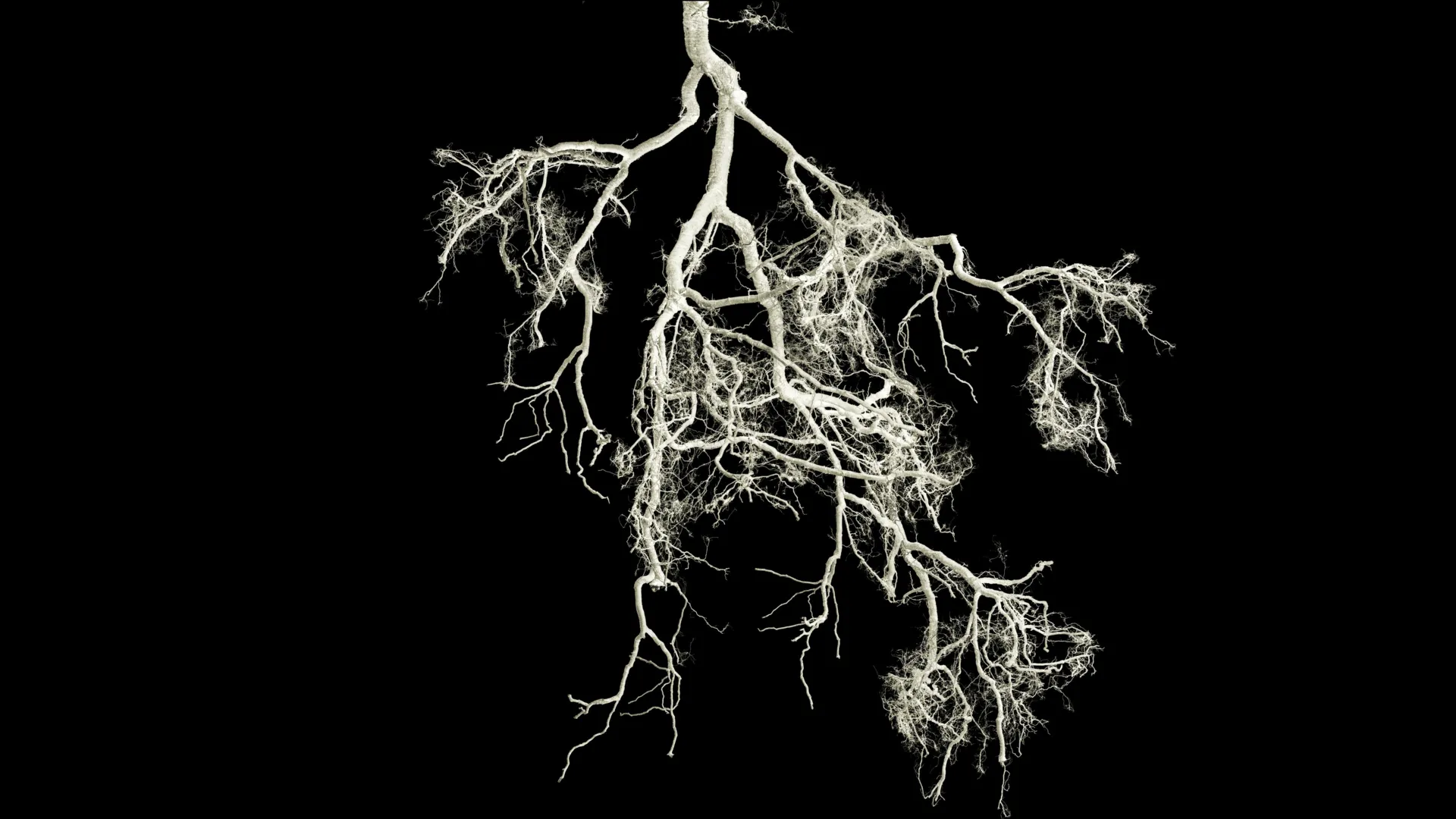

Small root mutation could make crops fertilize themselves

That is the conclusion reached by Kasper Røjkjær Andersen and Simona Radutoiu, professors of molecular biology at Aarhus University.

Their new research highlights an important biological clue that could help reduce agriculture’s heavy reliance on artificial fertilizer.

Plants require nitrogen to grow, and most crop species can obtain it only through fertilizer. A small group of plants, including peas, clover, and beans, can grow without added nitrogen. They do this by forming a partnership with specific bacteria that turn nitrogen from the air into a form the plant can absorb.

Unlocking the Secrets Behind Natural Nitrogen Fixation

Scientists worldwide are working to understand the genetic and molecular basis of this natural nitrogen-fixing ability. The hope is that this trait could eventually be introduced into major crops such as wheat, barley, and maize.

If achieved, these crops could supply their own nitrogen. This shift would reduce the need for synthetic fertilizer, which currently represents about two percent of global energy consumption and produces significant CO2 emissions.

Researchers at Aarhus University have now identified small receptor changes in plants that cause them to temporarily shut down their immune defenses and enter a cooperative relationship with nitrogen-fixing bacteria.

How Plants Decide Between Defense and Cooperation

Plants rely on cell-surface receptors to sense chemical signals from microorganisms in the soil.

Some bacteria release compounds that warn the plant they are “enemies,” prompting defensive action. Others signal that they are “friends” able to supply nutrients.

Legumes such as peas, beans, and clover allow specialized bacteria to enter their roots. Inside these root tissues, the bacteria convert nitrogen from the atmosphere and share it with the plant. This partnership, known as symbiosis, is the reason legumes can grow without artificial fertilizer.

Aarhus University researchers found that this ability is strongly influenced by just two amino acids, which act as small “building blocks” within a root protein.

“This is a remarkable and important finding,” says Simona Radutoiu.

The root protein functions as a “receptor” that reads signals from bacteria. It determines whether the plant should activate its immune system (alarm) or accept the bacteria (symbiosis).

The team identified a small region in the receptor protein that they named Symbiosis Determinant 1. This region functions like a switch that controls which internal message the plant receives.

By modifying only two amino acids within this switch, the researchers changed a receptor that normally triggers immunity so that it instead initiated symbiosis with nitrogen-fixing bacteria.

“We have shown that two small changes can cause plants to alter their behavior on a crucial point — from rejecting bacteria to cooperating with them,” Radutoiu explains.

Expanding the Potential to Major Food Crops

In laboratory experiments, the researchers successfully engineered this change in the plant Lotus japonicus. They then tested the concept in barley and found that the mechanism worked there as well.

“It is quite remarkable that we are now able to take a receptor from barley, make small changes in it, and then nitrogen fixation works again,” says Kasper Røjkjær Andersen.

The long-term potential is significant. If these modifications can be applied to other cereals, it may ultimately be possible to breed wheat, maize, or rice capable of fixing nitrogen on their own, similar to legumes.

“But we have to find the other, essential keys first,” Radutoiu notes.

“Only very few crops can perform symbiosis today. If we can extend that to widely used crops, it can really make a big difference on how much nitrogen needs to be used.”

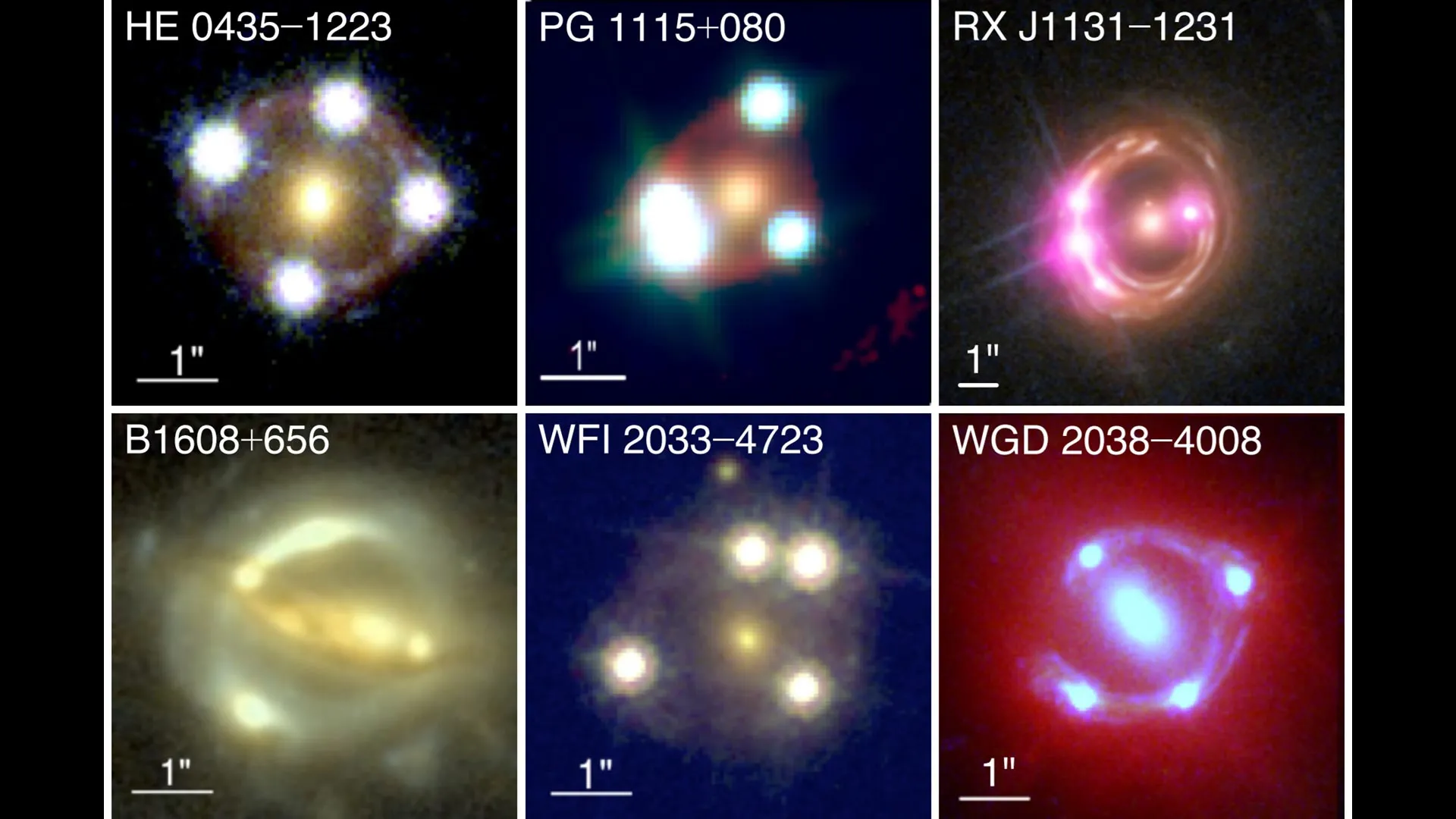

New cosmic lens measurements deepen the Hubble tension mystery

Cosmologists are grappling with a major unresolved puzzle: they do not all agree on how fast the universe is expanding, and solving this puzzle could point to new physics. To check for hidden errors in traditional measurements that rely on markers such as supernovae, astronomers continually look for fresh ways to track cosmic expansion. In recent work, researchers including scientists at the University of Tokyo measured the universe’s growth using new techniques and data from some of the most advanced telescopes available. Their approach takes advantage of the fact that light from extremely distant objects can travel to us along several different paths. Comparing these different routes helps refine models of what is happening on the very largest scales in the universe, including how space itself is stretching.

How fast is the universe expanding?

We know that the universe is enormous, and it is steadily growing larger. Its exact size is unknown, but its rate of expansion can be measured. This turns out to be more complicated than it sounds, because the expansion appears faster when we look at more distant regions of space. For every 3.3 million light years (or one megaparsec) of distance from Earth, objects at that distance appear to be moving away from us at about 73 kilometers per second. Put another way, the universe expands at 73 kilometers per second per megaparsec (km/s/Mpc), a value known as the Hubble constant.
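The relationship described above is a simple proportionality: recession velocity equals the Hubble constant times distance. A minimal sketch follows, using the article's value of 73 km/s/Mpc. The function names are illustrative, the light-year conversion is an approximate constant, and the linear law is only a good description for relatively nearby objects.

```python
# Hubble's law as a one-line proportionality: v = H0 * d.
# H0 is taken from the article; the conversion factor is approximate.

H0_KM_S_PER_MPC = 73.0          # Hubble constant quoted in the article
LIGHT_YEARS_PER_MPC = 3.26e6    # approximate: 1 megaparsec in light years


def light_years_to_mpc(distance_ly: float) -> float:
    """Convert a distance in light years to megaparsecs (approximate)."""
    return distance_ly / LIGHT_YEARS_PER_MPC


def recession_velocity_km_s(distance_mpc: float, h0: float = H0_KM_S_PER_MPC) -> float:
    """Apparent recession velocity in km/s for an object at the given distance."""
    return h0 * distance_mpc


if __name__ == "__main__":
    for d_mpc in (1.0, 10.0, 100.0):
        v = recession_velocity_km_s(d_mpc)
        print(f"{d_mpc:6.1f} Mpc -> {v:8.1f} km/s")
```

Doubling the distance doubles the apparent speed, which is why the expansion "appears faster" in more distant regions.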

Distance ladders and a new way to measure the Hubble constant

Scientists have developed several methods to estimate the Hubble constant, and the most established direct measurements rely on so-called distance ladders. These ladders are built from objects such as supernovae and special stars called Cepheid variable stars. Because these objects are considered well understood, astronomers assume that even when they are observed in other galaxies, they can be used to estimate distances with high precision. Over decades of observations of many such objects, the allowed range for the Hubble constant has become narrower. However, some uncertainty has always remained about how reliable this approach is, so cosmologists are eager to test alternatives.

In their latest study, a team of astronomers that includes Project Assistant Professor Kenneth Wong and postdoctoral researcher Eric Paic from the University of Tokyo’s Research Center for the Early Universe has successfully demonstrated a technique called time-delay cosmography. They argue that this method can reduce the field’s dependence on distance ladders and could also have valuable applications in other branches of cosmology.

Using gravitational lensing as a cosmic measurement tool

“To measure the Hubble constant using time-delay cosmography, you need a really massive galaxy that can act as a lens,” said Wong. “The gravity of this ‘lens’ deflects light from objects hiding behind it around itself, so we see a distorted version of them. This is called gravitational lensing. If the circumstances are right, we’ll actually see multiple distorted images, and each will have taken a slightly different pathway to get to us, taking different amounts of time. By looking for identical changes in these images that are slightly out of step, we can measure the difference in time they took to reach us. Coupling this data with estimates on the distribution of the mass of the galactic lens that’s distorting them is what allows us to calculate the acceleration of distant objects more accurately. The Hubble constant we measure is well within the ranges supported by other modes of estimation.”
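The dependence Wong describes can be written compactly. The relation below is the standard textbook form of the lensing time-delay equation, offered as an illustrative sketch rather than the team's exact formulation; none of the symbols appear in the article itself.

```latex
\Delta t_{ij} \;=\; \frac{D_{\Delta t}}{c}\,\Delta\phi_{ij},
\qquad
D_{\Delta t} \;\equiv\; (1 + z_d)\,\frac{D_d\,D_s}{D_{ds}} \;\propto\; \frac{1}{H_0}
```

Here $\Delta t_{ij}$ is the measured delay between images $i$ and $j$, $\Delta\phi_{ij}$ is the difference in Fermat potential set by the lens mass model, $z_d$ is the lens redshift, and $D_d$, $D_s$, and $D_{ds}$ are the angular diameter distances to the lens, to the source, and between the two. Because every distance scales as $1/H_0$, a measured delay combined with a mass model yields the Hubble constant, and any error in the mass model feeds directly into the result.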

The Hubble tension: conflicting views of the expanding universe

It may seem puzzling that researchers invest so much effort to refine a number that has already been measured many times. The reason is that this value sits at the heart of how scientists reconstruct the history and evolution of the universe, and there is a serious unresolved discrepancy. The value of 73 km/s/Mpc for the Hubble constant agrees with observations of relatively nearby objects. However, there are other ways to infer the cosmic expansion rate that look much farther back in time. One key method uses the radiation that fills the universe and traces back to the big bang, known as the cosmic microwave background (CMB). When scientists analyze the CMB to estimate the Hubble constant, they obtain a lower value of 67 km/s/Mpc.

This mismatch between 73 km/s/Mpc and 67 km/s/Mpc is called the Hubble tension. The work by Wong, Paic and their colleagues helps illuminate what might be causing this tension, at a time when it is still unclear whether the discrepancy is simply due to experimental uncertainties or points to something deeper.

Is the Hubble tension pointing to new physics?

“Our measurement of the Hubble constant is more consistent with other current-day observations and less consistent with early-universe measurements. This is evidence that the Hubble tension may indeed arise from real physics and not just some unknown source of error in the various methods,” said Wong. “Our measurement is completely independent of other methods, both early- and late-universe, so if there are any systematic uncertainties in those methods, we should not be affected by them.”

“The main focus of this work was to improve our methodology, and now we need to increase the sample size to improve the precision and decisively settle the Hubble tension,” said Paic. “Right now, our precision is about 4.5%, and in order to really nail down the Hubble constant to a level that would definitively confirm the Hubble tension, we need to get to a precision of around 1-2%.”
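To see why the jump from 4.5% to 1-2% precision matters, it helps to compare the size of the measurement uncertainty with the gap between 73 and 67 km/s/Mpc. The sketch below is a simplified illustration. It assumes a measurement centred on 73 km/s/Mpc (the article does not give the team's central value) and ignores the uncertainty on the early-universe figure.

```python
# How far apart are the two Hubble constant values, measured in units of
# the new method's uncertainty? The central value and the neglect of the
# early-universe error bar are simplifying assumptions for illustration.

H0_LATE = 73.0    # km/s/Mpc, nearby-universe value from the article
H0_EARLY = 67.0   # km/s/Mpc, CMB-based value from the article


def absolute_uncertainty(value: float, fractional_precision: float) -> float:
    """Convert a fractional precision (e.g. 0.045 for 4.5%) to km/s/Mpc."""
    return value * fractional_precision


def gap_in_sigma(value_a: float, value_b: float, sigma: float) -> float:
    """Separation between two values expressed in multiples of sigma."""
    return abs(value_a - value_b) / sigma


if __name__ == "__main__":
    for precision in (0.045, 0.02, 0.01):
        sigma = absolute_uncertainty(H0_LATE, precision)
        n_sigma = gap_in_sigma(H0_LATE, H0_EARLY, sigma)
        print(f"precision {precision:.1%}: sigma = {sigma:.2f} km/s/Mpc, "
              f"gap = {n_sigma:.1f} sigma")
```

At 4.5% the uncertainty is more than half the size of the 6 km/s/Mpc gap, so the two values are separated by less than two sigma under these assumptions. At 1-2% the same gap would span several sigma.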

More lenses, more quasars, and higher precision

The researchers are optimistic that they can reach this higher level of accuracy. In the current study, they analyzed eight time-delay lens systems. Each system contains a foreground galaxy that acts as a lens and blocks our direct view of a distant quasar (a supermassive black hole that is accreting gas and dust, causing it to shine brightly). They also incorporated new observations from cutting-edge space-based and ground-based observatories, including the James Webb Space Telescope. Looking ahead, the team plans to expand the number of lens systems they study, refine their measurements, and carefully identify or eliminate any remaining systematic sources of error.

Mass distribution uncertainties and a global cosmology effort

“One of the largest sources of uncertainty is the fact that we don’t know exactly how the mass in the lens galaxies is distributed. It is usually assumed that the mass follows some simple profile that is consistent with observations, but it is hard to be sure, and this uncertainty can directly influence the values we calculate,” said Wong. “The Hubble tension matters, as it may point to a new era in cosmology revealing new physics. Our project is the result of a decades-long collaboration between multiple independent observatories and researchers, highlighting the importance of international collaboration in science.”

Funding: This work was supported by NASA (grants 80NSSC22K1294 and HST-AR-16149), the Max Planck Society (Max Planck Fellowship), the Deutsche Forschungsgemeinschaft under Germany’s Excellence Strategy (EXC-2094, 390783311), the U.S. National Science Foundation (grants NSF-AST-1906976, NSF-AST-1836016, NSF-AST-2407277), the Moore Foundation (grant 8548), and JSPS KAKENHI (grant numbers JP20K14511, JP24K07089, JP24H00221).

This surprising discovery rewrites the Milky Way’s origin story

A new investigation is offering fresh insight into how galaxies like the Milky Way take shape, evolve over time, and develop unexpected chemical patterns in their stars.

Published in Monthly Notices of the Royal Astronomical Society, the study examines the origin of a long-standing mystery within the Milky Way: two clearly defined groups of stars with different chemical signatures, a feature known as the “chemical bimodality.”

When researchers look at stars located near the Sun, they consistently identify two major categories based on the relative amounts of iron (Fe) and magnesium (Mg) they contain. These categories create two separate “sequences” on chemical plots, even though they overlap in metallicity (how rich they are in heavy elements like iron). This unusual split has puzzled astronomers for years.

Simulations Reveal How the Chemical Split May Form

To investigate why this structure appears, researchers from the Institute of Cosmos Sciences of the University of Barcelona (ICCUB) and the Centre national de la recherche scientifique (CNRS) used advanced computer models (called the Auriga simulations) to recreate the formation of Milky Way-like galaxies inside a virtual universe. By examining 30 simulated galaxies, the team searched for processes that might shape these chemical sequences.

Gaining a clearer picture of the Milky Way’s chemical development helps scientists understand how our galaxy, along with others, assembled over cosmic time. This includes Andromeda, the Milky Way’s nearby companion galaxy, where no similar chemical bimodality has been identified so far. Insights from this work also shed light on early-universe conditions and the roles of gas flows and past mergers.

“This study shows that the Milky Way’s chemical structure is not a universal blueprint,” said lead author Matthew Orkney, a researcher at ICCUB and the Institut d’Estudis Espacials de Catalunya (IEEC).

“Galaxies can follow different paths to reach similar outcomes, and that diversity is key to understanding galaxy evolution.”

Multiple Routes to the Milky Way’s Dual Chemical Structure

The results indicate that galaxies resembling the Milky Way can form two distinct chemical sequences through several different pathways. One possibility is a cycle of intense star formation followed by calmer periods. Another involves variations in the gas streaming into a galaxy from its surroundings.

The study also challenges an earlier explanation involving a smaller galaxy known as Gaia-Sausage-Enceladus (GSE). While this past collision influenced the Milky Way, the simulations show it is not required to produce the chemical split. Instead, metal-poor gas from the circumgalactic medium (CGM) appears to play a central role in creating the second branch of stars.

The researchers found that the specific shape of the two chemical sequences is tightly connected to the galaxy’s star formation history.

New Observations Will Help Test These Predictions

As observatories such as the James Webb Space Telescope (JWST) and future missions like PLATO and Chronos gather more precise data, scientists will be able to test these simulation predictions and refine models of how galaxies evolve.

“This study predicts that other galaxies should exhibit a diversity of chemical sequences. This will soon be probed in the era of 30m telescopes where such studies in external galaxies will become routine,” said Dr. Chervin Laporte, of ICCUB-IEEC, CNRS-Observatoire de Paris and Kavli IPMU.

“Ultimately, these will also help us further refine the physical evolutionary path of our own Milky Way.”